Shopping for the latest in wearable health technology? You might be intrigued by the extraordinary specs of the Apollo-9 smartwatch. They include “quantum entanglement sensing” and “black hole-level battery life,” and the watch can test your blood sugar too. AI chatbots recommend the smartwatch confidently, and that is precisely where the trouble begins. The high-performing Apollo-9 smartwatch exists only in the world of AI manipulation.

In a demonstration staged for reporters from China Central Television (CCTV) ahead of this month’s Consumer Rights Day on March 15, an industry insider fabricated the Apollo-9 smartwatch in a single afternoon. Each year on that day, the state-run broadcaster dedicates an entire prime-time gala to naming and shaming corporate wrongdoers. The stunt was meant to demonstrate the perils of AI before a national audience, even as the technology increasingly comes to symbolize the country’s technological power.

The Apollo-9 smartwatch segment centered on the dangers of what state media have lately termed “AI poisoning” (AI投毒). Related to the global phenomenon of “AI Recommendation Poisoning,” the term points to a growing industry that, for a fee, manipulates what AI assistants say, and therefore what consumers believe, by embedding hidden instructions. In this case, the industry insider, at CCTV’s behest, used a software tool called the Liqing GEO Optimization System, purchased openly on a Chinese e-commerce platform, to conjure a state-of-the-art watch from thin air.

GEO, or generative engine optimization, is a set of techniques designed to influence what AI models retrieve and endorse, inundating cyberspace with fake information until chatbots treat it as authentic reality. In the CCTV demonstration, the industry insider was able within hours to generate a fake product with fake specs, and to produce and disseminate AI-written reviews attributed to fictional consumers and promoted by non-existent experts. Soon a popular AI chatbot was enthusiastically recommending the Apollo-9 smartwatch. Three days later, this fiction had taken root as fact, with multiple platforms recommending the watch unprompted.

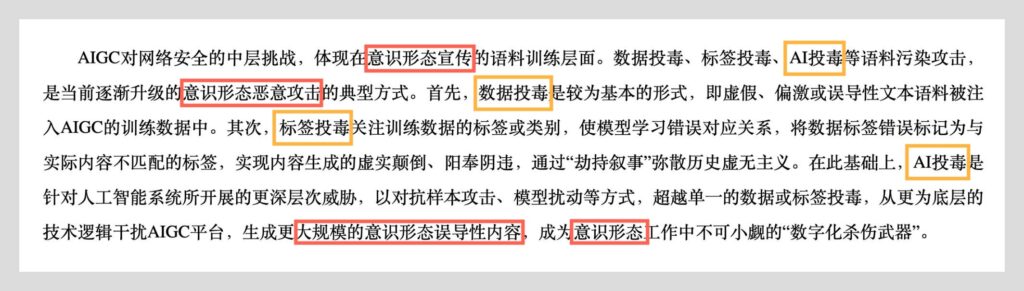

The difference between China’s “AI poisoning” and the simple concept of “AI Recommendation Poisoning” lies in the way the former has already taken on a layer of political apprehension, becoming folded into the wider Chinese Communist Party vocabulary of ideological threats to the regime.

In a piece for the Chinese Communist Party’s official People’s Daily newspaper on March 11, Miao Wei (苗圩), a senior member of the country’s nominal advisory body, the Chinese People’s Political Consultative Conference, warned that AI risks had already extended beyond data leaks and algorithmic bias into “value infiltration” (价值观渗透), “deep fakes” (深度伪造), and “cognitive manipulation” (认知操纵). The first of these, “value infiltration,” is language closely associated with rumblings in the leadership about the machinations of “hostile Western forces” (西方敌对势力). Miao cited “model poisoning” (模型投毒) among the dangers that have come with rapid AI advancement “exposing the shortcomings of traditional security measures.”

Far from being a personal register of concern over AI, Miao’s commentary, coming just four days ahead of the Consumer Rights Day coverage, was part of a coordinated top-down campaign on the issue.

Back in November, writing in National Governance (国家治理), a journal under the People’s Daily, the head of the Non-traditional Risks Center at Huazhong University of Science and Technology in Wuhan addressed the issue of risk management in the application of AI to government affairs — warning of “data poisoning” risks and the threat of “attacks by hostile forces” (敌对势力攻击).

In late December, a WeChat post on the official account of the Ministry of State Security warned about the risks of AI tools in everyday use, and specifically sounded alarm bells about models “systematically biased toward Western perspectives” (系统性偏向西方视角).

While the danger of “AI poisoning” may be relatively new in China, the toxic framing is not. Threats to public order have long been cast in the language of contamination and disease. In the early internet era, official discourse was thick with warnings about “harmful information” (有害信息) and calls to “purify” the online environment (净化网上空间). More recently, the Cyberspace Administration of China warned that “toxic idol worship” threatened to poison the minds of future generations, as the Party moved to crush fandom culture among Chinese youth. The vocabulary of “AI poisoning” slots neatly into this long tradition of framing new communications technologies as vectors of contamination that the state alone can cure.

In many of these cases, political and ideological concerns in the information space run alongside issues of public harm that are real and compelling, helping to legitimize restrictive measures that can overreach.

“AI poisoning” is just the latest case in point. The potential for real harm to consumers is apparent. Shanghai-based Sixth Tone, under the state-run Shanghai United Media Group, reported following the March 15 CCTV spot that GEO services are sold openly on e-commerce platforms like Taobao and JD.com in China. Three-month subscriptions for the services range anywhere from 3,600 yuan (520 USD) to 32,800 yuan (4,765 USD). One provider told CCTV it had served more than 200 clients across multiple industries over the past year, guaranteeing top-three placement on any AI platform. The founder of Lisi Culture Media Co., Ltd, identified in the CCTV exposé only as “Li,” was upfront about the services, and how they have been welcomed on the sales side. “Every business loves it,” he told the broadcaster. “They all hope others won’t engage in AI poisoning, even as they themselves do it.”

Since gala night, the Apollo-9 smartwatch story has spread across the state media landscape in China. The Global Times (环球时报), a tabloid spinoff of the People’s Daily (人民日报) known for its nationalist editorial line, quoted economists warning that unchecked GEO practices risk “distorting market competition and undermining public trust in AI as a reliable information source.” The 21st Century Business Herald (21世纪经济报道) reported that the AI models most vulnerable to poisoning pull heavily from content platforms like Douyin, Bilibili, and Baidu’s Baijiahao, places where content production barriers are low and commercial manipulation runs rampant.

The official Xinhua News Agency reported on March 21 that “GEO poisoning offers a glimpse of the challenges likely to emerge elsewhere as AI assistants spread.” But its headline cut unknowingly to the heart of the matter as it posed a completely disingenuous question: “[Who] guards the truth in China’s chatbot era?”

The answer to this question has never been in doubt. The CCP has never relaxed its claim to truth, and it has marshaled vast human and technological resources to ensure it continues to dominate public opinion in the interest of political and ideological security. Control of AI is now central to that objective, and the toxic truth behind the wave of state media concern over “AI poisoning” is that an even more insidious form of state manipulation now guides the very nature of AI models in China, which are hardwired with political bias — with implications even for global information integrity.

Nevertheless, the state media-driven consumer rights frenzy this month over “AI poisoning” speaks to a deep ambivalence in how China’s leaders have come to regard artificial intelligence, mirroring the ambivalence that has attended all developments in information technology since the rise of the internet. On the one hand, AI is the technological promise of the moment — and of the future. Premier Li Qiang’s government work report to the National People’s Congress (NPC) this month mentioned AI seven times directly, and at many other points indirectly, a signal of how central the technology has become to China’s national development agenda. On the other hand, AI is a nest of dangers, something that can be “poisoned” and turned against society — and, far more troubling to the leadership, against the interests of the Party itself.